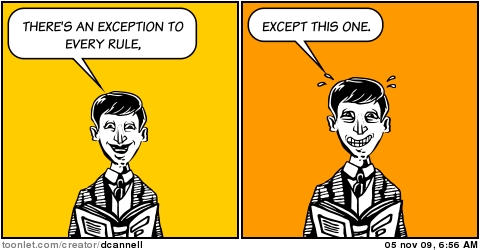

Exceptions do not follow the rules. That is their nature. Humans know how to handle them. Can that be learnt?

Learning a rule

One of the first real-world datasets I had to handle consisted of the examination results of the two-year propedeuse in physics. Students passed or failed depending on their scores for 15 topics, given as marks between 1 (lowest) and 10 (highest). There were no written rules for the decision. A new member of the examination committee wondered whether the rules could be reconstructed in retrospect from the results over a number of years. (This was long before the university managers introduced the habit of permanently changing the curriculum, making any evaluation impossible.)

The dataset consisted of hundreds of student results, each given by 15 features (the marks) and labeled as one of two classes: fail or pass. The dataset was repeatedly split into sets for training and testing. Linear and non-linear classifiers were studied, based on approximated normal distributions, on Parzen density estimators or on the nearest neighbor rule. Very popular in our group in those days was the polynomial classifier by Specht. An obvious choice would have been the decision tree. Strangely enough, it was not in use in pattern recognition at that time. Instead we used a simple predecessor based on bounded boxes aligned with the feature axes.
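
The procedure of repeated splitting can be sketched as follows. This is only an illustration under stated assumptions, not the original experiment: the marks are synthetic, the labeling rule (pass if the mean mark is at least 6.5) is invented purely to obtain labels, and the function names are hypothetical. Of the classifiers mentioned above, only the nearest neighbor rule is shown.

```python
import numpy as np

def make_synthetic_marks(n_students, n_topics=15, seed=0):
    """Synthetic stand-in for the examination data (NOT the real dataset)."""
    rng = np.random.default_rng(seed)
    # Integer marks between 1 and 10, centred around 6.5 (arbitrary choice).
    raw = rng.normal(6.5, 1.5, (n_students, n_topics))
    marks = np.clip(np.rint(raw), 1, 10)
    # Invented rule, used only to create labels: pass (1) if the mean
    # mark is at least 6.5, otherwise fail (0).
    labels = (marks.mean(axis=1) >= 6.5).astype(int)
    return marks, labels

def nearest_neighbor_predict(train_x, train_y, test_x):
    """Assign each test object the label of its nearest training object."""
    # Squared Euclidean distances between all test and training objects.
    d = ((test_x[:, None, :] - train_x[None, :, :]) ** 2).sum(axis=2)
    return train_y[d.argmin(axis=1)]

def repeated_split_errors(x, y, n_repeats=10, test_fraction=0.5, seed=1):
    """Test error of the nearest neighbor rule over random splits."""
    rng = np.random.default_rng(seed)
    n_test = int(round(test_fraction * len(x)))
    errors = []
    for _ in range(n_repeats):
        perm = rng.permutation(len(x))
        test, train = perm[:n_test], perm[n_test:]
        pred = nearest_neighbor_predict(x[train], y[train], x[test])
        errors.append(float(np.mean(pred != y[test])))
    return np.array(errors)

marks, labels = make_synthetic_marks(300)
errors = repeated_split_errors(marks, labels)
print(f"mean test error over {len(errors)} splits: {errors.mean():.3f}")
```

Averaging over several random splits gives a more stable error estimate than a single split, which matters for a dataset of only a few hundred objects.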

Results with an exception

The results were very good, with just a few errors in the test set. Most of them were really boundary cases, but there was one very clear exception. One student with many very high marks (9’s and 10’s), but with a 2 for one of the key courses, had passed. No other student had even passed with a mark lower than a 5. This object was far away from any decision boundary. How was this possible? Was there a copy error? The database was based on handwritten files and copied to paper tape for the computer classification experiments. Checking made clear that there was no copy error. The low mark was real and known to the examination committee.

Committee members were not very talkative. Not everybody liked the idea of learning their rules from their data. Moreover, there was a privacy issue. So it was not possible to obtain an explanation other than: it is the task of the committee to see that the right students pass.

Exception handling

The examination committee consisted of staff members who themselves taught some of the subjects. As a result, they knew many students personally. Somebody with exceptionally high marks would especially have drawn attention. It would have been a waste to let such a student redo examinations that he had already passed gloriously, which would have resulted in a delay of half a year. So it seems likely that, in a case that was clearly exceptional, a decision was made based on observations outside the examination results.

The case was exceptional in our data analysis as well. The feature vector representing this student was far away from any other object. It was in an empty area. Maybe, if the dataset had been much larger, some similar examples would have been available. For the given dataset it was not possible to obtain a generalization that would cover the exceptional example.
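
One way to make "far away from any other object" concrete is to compute, for every object, the distance to its nearest neighbor in the dataset. The sketch below is an assumption about how such a check could look, not a description of the original analysis. The marks are synthetic, and the exceptional student is a made-up vector that merely mimics the description above (mostly 9's and 10's, with a single 2).

```python
import numpy as np

def nearest_neighbor_distances(x):
    """Euclidean distance from each object to its nearest other object."""
    d = np.sqrt(((x[:, None, :] - x[None, :, :]) ** 2).sum(axis=2))
    np.fill_diagonal(d, np.inf)  # ignore the zero distance to itself
    return d.min(axis=1)

rng = np.random.default_rng(0)
# Synthetic marks for 200 students on 15 topics (NOT the real data).
marks = np.clip(np.rint(rng.normal(6.5, 1.0, (200, 15))), 1, 10)
# Made-up exceptional student: very high marks, but a 2 for one topic.
exception = np.array(
    [9, 10, 9, 10, 9, 9, 10, 2, 10, 9, 9, 10, 9, 10, 9], dtype=float
)
data = np.vstack([marks, exception])  # the exception is the last row

nn_dist = nearest_neighbor_distances(data)
most_isolated = int(nn_dist.argmax())
print("index of most isolated object:", most_isolated)
print("its nearest neighbor distance:", round(float(nn_dist[most_isolated]), 2))
print("median nearest neighbor distance:", round(float(np.median(nn_dist)), 2))
```

An object whose nearest neighbor distance is much larger than the typical value lies in an empty area of the feature space, so no classifier trained on this data has local evidence for its label.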

Conclusion

In conclusion, it is not possible to learn from or for exceptional examples, almost by definition. The committee was able to classify such an example by using additional information. They were able to detect that it was exceptional, and so could we. Then they fell back on background information, but that was not available to us. The apparent solution for a pattern recognition system is to reject the example and return it to a human expert. Pattern recognition systems should have a reject option, especially if they concern humans.
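
A reject option of this kind can be sketched in a few lines. The example below is a minimal illustration, assuming a nearest neighbor classifier that refuses to decide when the nearest training object is further away than a threshold. The `REJECT` label, the function name, the two-feature toy data and the threshold value are all hypothetical choices made for the illustration.

```python
import numpy as np

REJECT = -1  # hypothetical label: "return this case to a human expert"

def classify_with_reject(train_x, train_y, test_x, max_distance):
    """Nearest neighbor rule that rejects objects lying in empty areas."""
    d = np.sqrt(((test_x[:, None, :] - train_x[None, :, :]) ** 2).sum(axis=2))
    pred = train_y[d.argmin(axis=1)].copy()
    # Too far from every training object: do not decide.
    pred[d.min(axis=1) > max_distance] = REJECT
    return pred

# Toy data with two marks per student (made up): 1 = pass, 0 = fail.
train_x = np.array([[6, 6], [7, 6], [6, 7], [4, 4], [3, 4], [4, 3]], dtype=float)
train_y = np.array([1, 1, 1, 0, 0, 0])
# Two ordinary students and one far from everything seen in training.
test_x = np.array([[7, 7], [3, 3], [10, 2]], dtype=float)

result = classify_with_reject(train_x, train_y, test_x, max_distance=2.0)
print(result.tolist())  # [1, 0, -1]
```

The threshold determines how many cases are handed back to the expert. A distance-based reject like this one only covers objects in empty areas; objects close to the decision boundary would need a separate, ambiguity-based reject.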

But how did the committee know how to handle the exception? Did they learn this? From what? It is not very likely that they collected a number of cases like these and followed the careers of such students over the years. They apparently followed a reasoning that is not grounded in observations, simply because there is no possibility of collecting them.

Filed under: Applications • Classification • History